
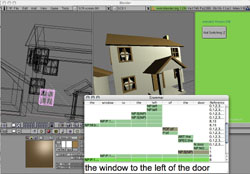

Spatially Grounded Language in 3D Modeling Software

In this project we demonstrate an application of our work on understanding natural language about spatial scenes. All 3D modeling applications face the problem of letting their users interact with a 2D projection of a 3D scene. Rather than relying on the common solutions, which include multiple views and selective display and editing of the scene, we employ our language learning and understanding research to allow speech-based selection and manipulation of objects in the 3D scene. We demonstrate such an interface, based on our Bishop project, for the 3D modeling application Blender.

Peter Gorniak, Deb Roy


Related papers:

BISHOP|BLENDER: Spatially Grounded Language Understanding in 3D Modelling Software, Peter Gorniak and Deb Roy, Demo at NAACL 2004.


QuickTime video
