| ||

|  | |

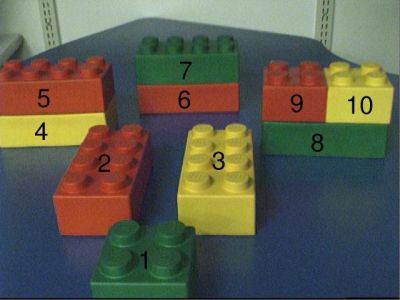

Fuse: Semantically-primed speech recognition using visual contextWe are developing a multimodal processing system called Fuse to explore the effects of visual context on the performance of speech recognition. The basic idea underlying Fuse is that by building a speech recognizer that has also has visual input, visual context may be used to second guess what a person is likely to say, and to use these predictions to improve speech recognition accuracy. To implement this idea, several problems of grounding language in vision (and vice versa) must be addressed. The current version of the system consists of a medium vocabulary speech recognition system, a machine vision system that perceives objects on a tabletop, a language acquisition component that learns mappings from words to objects and spatial relations, and a linguistically driven focus of visual attention. The performance of the system has recently been evaluated with a corpus of naturally spoken, fluent speech. The speech ranged from simple constructions such as "the vertical red block" to more complex utterances such as "the large green block beneath the red block". We have found that our approach of integrating visual context reduces the error rate of the speech recognizer by over 30%. We are currently investigating implications of this improved recognition rate on the overall speech understanding accuracy of the system. This work has applications in contextual natural language understanding for intelligent user interfaces. For example, in wearable computing applications, awareness of the users physical context may be leveraged to make better predictions of the users speech to support robust verbal command and control. Niloy Mukherjee, Deb Roy |

|

|

|